TVs and monitors seem to serve the same purpose, but they’re clearly different. With smart TV prices going down while quality has gone up, you may be wondering if you can simply use a smart TV as your computer monitor. But depending on what you use the screen for, you might miss out on the best viewing experience. So what are the differences between computer monitors and smart TVs anyway?

The key differences between computer monitors and smart TVs are that monitors typically have a higher pixel density and refresh rate, while smart TVs have built-in streaming and typically higher brightness. Monitors are better for gaming and detailed computing, while TVs excel at content consumption.

The technology that drives monitors and TVs has largely converged. While you may still find more exotic technologies, most TVs and computer monitors are based on LED screen technology (typically LED-backlit LCD panels). But before you decide that a TV or a monitor will be just fine for whatever you might be viewing, you should be aware of some key differences between the devices.

Main Differences Between Computer Monitors and TV Screens

While the technology that powers both devices is relatively similar, there are some differences between monitors and TVs (see also our guide on setting up an Xbox with a monitor). They can indeed be used interchangeably, but you may run into problems if you use one for what the other is designed for.

For the average consumer, these differences may not be profound. However, if you’re a creative professional or a gaming enthusiast, they can matter a great deal. Here are some key differences between the two (by the way, we have another guide on the differences between smart and regular TVs):

- Refresh Rate – Monitors usually have a higher refresh rate. This can be crucial if you are playing a fast-paced game or using your screen for video editing, where every split second matters.

- True Colors – Monitors generally aim to display colors exactly as the computer sends them. TVs, on the other hand, often apply picture-processing features that make movies and shows look more vivid. This can distort the real color, which is a complication if you’re doing video editing.

- Sound – TVs almost always come with built-in speakers. Many monitors do not; they rely on your computer’s speakers or headphones.

- Inputs – While both share common inputs like HDMI or VGA, a monitor typically has fewer I/O options. TVs usually have more because they need to work with whatever video equipment you already have on hand, while a monitor may expect you to be a little more savvy and use exactly the right cable.

- Size – Another factor that separates the two types of screens is size. TVs come in all sizes, from small to absolutely huge. Monitors are usually smaller, although some gaming monitors can be larger, especially ultrawide models. What this translates to is pixel density. A 24-inch 4K monitor and a 40-inch 4K TV display the same number of pixels; what differs is how many pixels per inch you see. Because the monitor packs those pixels into a smaller area, its image looks crisper. Some computer monitors also feature curved screens. The curve roughly follows the eye’s natural curvature, so more of the screen stays in view at once. These screens are popular with gamers looking for an advantage.
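To put numbers on the pixel-density point, pixels per inch (PPI) is the screen’s diagonal resolution in pixels divided by its diagonal size in inches. The short Python sketch below assumes both screens are 4K UHD (3840 × 2160 pixels); the function name `pixels_per_inch` is just an illustrative helper, not part of any library.

```python
import math


def pixels_per_inch(width_px: int, height_px: int, diagonal_in: float) -> float:
    """Pixel density: diagonal length in pixels divided by diagonal size in inches."""
    diagonal_px = math.hypot(width_px, height_px)  # sqrt(width^2 + height^2)
    return diagonal_px / diagonal_in


# Assumption: both screens are 4K UHD (3840 x 2160), as in the example above.
monitor_ppi = pixels_per_inch(3840, 2160, 24)
tv_ppi = pixels_per_inch(3840, 2160, 40)

print(f"24-inch 4K monitor: {monitor_ppi:.0f} PPI")  # about 184 PPI
print(f"40-inch 4K TV:      {tv_ppi:.0f} PPI")       # about 110 PPI
```

With these assumptions, the monitor comes out at roughly 184 PPI and the TV at roughly 110 PPI: the same pixels, spread over a much larger screen.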

Whatever screen you connect, your computer’s display settings are where you’ll fine-tune things like resolution and color. Note in the picture above, the display settings for a MacBook are shown on the exquisite Samsung Serif TV (on Amazon). We love this TV, but what we love even more is how easy it is to get up and running on a TV like this when you want to use it as a monitor.

Common Problems With TVs and Monitors

These differences also translate into different technical performance issues you’ll need to watch out for. TVs and monitors share some issues, like screen tearing, which happens when the device sending the image is not in sync with the screen’s refresh rate, so the screen shows part of the previous frame alongside the new one. The result is a visible “tear” across the image.
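Here is a simplified Python model of how tearing happens, assuming a screen that draws its rows top to bottom during each refresh. If a new frame arrives partway through that pass, the rows already drawn still show the old frame and the rest show the new one. The frames and the `scan_out` helper are hypothetical illustrations, not how any particular display or graphics driver works internally.

```python
def scan_out(old_frame: list[str], new_frame: list[str], swap_row: int) -> list[str]:
    """Return what one refresh shows if the new frame arrives while row
    `swap_row` is being drawn. Rows above it were already drawn from the
    old frame; rows from `swap_row` down come from the new frame."""
    return old_frame[:swap_row] + new_frame[swap_row:]


# Hypothetical frames: a vertical bar ("#") moves three columns right between frames.
old_frame = ["..#....."] * 4
new_frame = [".....#.."] * 4

# In sync: the new frame arrives just as a refresh starts, so every row matches.
synced = scan_out(old_frame, new_frame, swap_row=0)

# Out of sync: the new frame arrives halfway down the screen.
torn = scan_out(old_frame, new_frame, swap_row=2)

for row in torn:
    print(row)
```

In the torn output, the bar jumps sideways between the second and third rows; that break is the tear. Keeping frame delivery in step with the refresh, as in the `synced` case, is the goal of sync features such as V-Sync.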

Another common problem is motion blur, where moving images look smeared or blurry on the screen. And lastly, there’s image ghosting, where a faint trail of pixels follows moving objects on the screen. Many monitor manufacturers build features into their products to reduce these issues.

TV manufacturers do as well, but these issues matter less on TVs because they are designed mainly for watching movies and shows. As we mentioned earlier, a monitor is the better choice for a computer, especially when you are gaming or editing photographs or video. In the end, each device has its purpose.

For Gaming Especially: Input Lag And Response Time

For gaming, every millisecond counts, so it’s important to consider a TV’s input lag and response time. Input lag is the delay between when you input a command and when the result appears on the screen, while response time is the time it takes for a pixel to change from one color to another.

TVs typically have higher input lag and slower response times than dedicated computer monitors. This can result in a less responsive and less smooth experience when using a TV as a monitor for your computer, especially when playing fast-paced games or doing other activities that require quick and precise movements.
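One way to see why refresh rate and response time matter is to look at how long each frame stays on screen: 1000 ms divided by the refresh rate in Hz. The Python sketch below computes that and also checks a simplified rule of thumb (our assumption, not a formal standard): if a pixel’s response time is longer than the frame time, it can’t finish changing color before the next frame, which tends to show up as blur or ghosting. The 5 ms and 10 ms response times are hypothetical values, not measurements of any real display.

```python
def frame_time_ms(refresh_hz: float) -> float:
    """How long each frame stays on screen, in milliseconds."""
    return 1000.0 / refresh_hz


def response_keeps_up(response_ms: float, refresh_hz: float) -> bool:
    """Simplified rule of thumb (an assumption): pixels should finish changing
    color within one frame, or moving objects may smear."""
    return response_ms <= frame_time_ms(refresh_hz)


for hz in (60, 120, 144, 240):
    print(f"{hz:>3} Hz -> {frame_time_ms(hz):5.2f} ms per frame")

# Hypothetical response times, for illustration only.
print(response_keeps_up(5, 144))   # True: 5 ms fits inside a ~6.94 ms frame
print(response_keeps_up(10, 144))  # False: 10 ms is longer than one frame
```

At 60 Hz each frame lasts about 16.67 ms; at 144 Hz it’s under 7 ms, so a faster screen can show the result of your input sooner and motion looks smoother.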

To minimize input lag and improve response time when using a TV as a monitor, look for a model rated for low input lag and a fast response time, and turn on its game mode if it has one, since game modes typically cut back the picture processing that adds lag. Additionally, consider using a wired connection instead of a wireless one to reduce latency and improve performance.

Should You Use A TV Or A Computer Monitor? (Our Guidance)

We’ll look at specific use cases below, but first we can give a broad recommendation summarizing our thoughts and experience on this topic. As avid technophiles, and as someone who routinely screen-casts to the Serif pictured in this article, our quick and dirty advice on which one you should use is pretty simple.

Pro Tip: For gaming, a TV is great if it has low input lag and a high refresh rate (though these details may not matter for non-competitive games). For detailed computing work, the higher pixel density of a computer monitor is easier on the eyes; a TV can work in a pinch, and is better for content consumption.

And if you’re thinking that you’re just going to be streaming content – guess what – many users agree with us here (forum link): you’re better off buying a separate streaming device like an Apple TV or a Roku to be the brain of your existing TV. As always, your actual use cases will define your needs, so let’s look at it from that angle.

The Purpose of a TV vs a Computer Monitor

While both devices may seem incredibly similar, they serve different purposes. You can use a TV with a computer, and you can use a device meant for a TV, like a gaming console, on a monitor. To get the most out of each product, though, you should use it for its intended purpose.

A smart TV is designed for streaming shows, watching cable TV, and playing DVDs and Blu-ray discs. That kind of media doesn’t require a high refresh rate, which is why video gaming often benefits from a monitor instead. A monitor’s higher refresh rate and pixel density make it well suited to multiplayer gaming, work, and video calls. For creatives especially, the color accuracy and refresh rate of a high-quality monitor make editing go much better.

Modern TVs come with a lot more features than computer monitors. For instance, a smart TV lets you access streaming apps and other software through its built-in OS. These features greatly enhance the usability of the device. Computer monitors have been improving over the years, but because they are used predominantly with computers, they don’t need extra features and typically don’t have smart TV features built in.

Because monitors are built to work with computers, they also lack many of the connections you might get on a TV. A monitor always needs a source hooked up to show any image. That was also true of older TVs, but a smart TV only needs power (and, for streaming, an internet connection) to start working.

When To Use a TV vs. a Computer Monitor

While it may seem like the obvious answer is to use your monitor with a computer and your TV in your living room, that isn’t always the best choice. In certain circumstances, the other device may be better suited to your viewing needs.

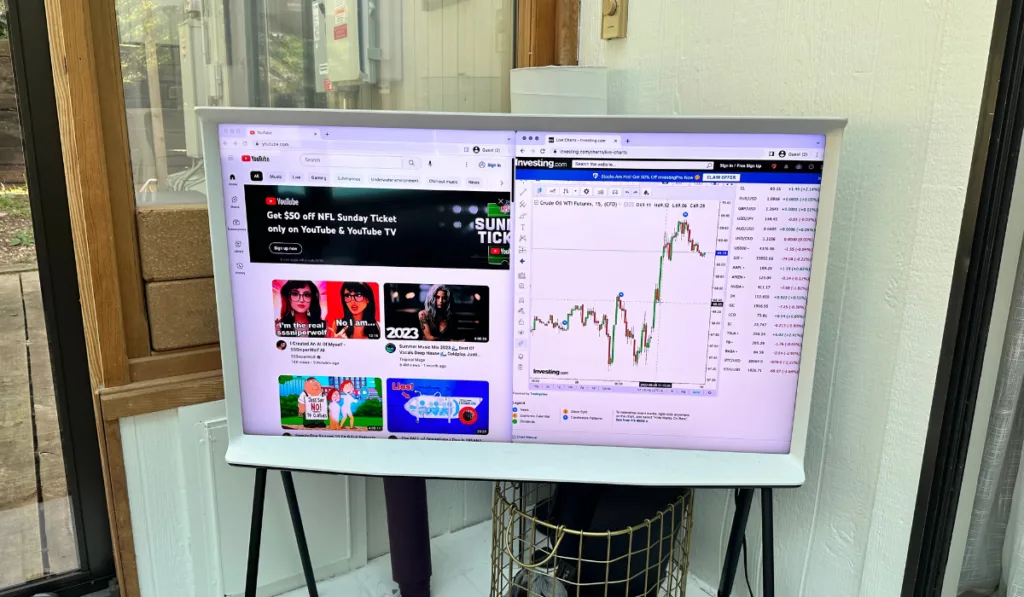

In general, you can do whatever you want! In the picture above, YouTube next to stock charts is just one example of content you may want to put on a bigger screen like this. But let’s think through some more use cases.

When To Use A TV

A good time to use your TV with your devices is when you’re sitting far away from the screen, for example, on a couch. Additionally, if you’re watching movies or TV shows, a television will probably make for a much better viewing experience. And lastly, it’s probably best to use a TV when more than one person is going to watch the screen.

In these cases, you may even want to hook your computer up to a TV for better viewing; many home theatre systems work this way. If your laptop or desktop has an HDMI output, you can connect it to the TV quickly with a single HDMI cable.

When To Use A Monitor

A great time to use a monitor is when you’re sitting close to the screen, like when playing video games. Most people sit much closer to the screen while gaming than when they sit back to watch a movie or TV program. Another good time to use a monitor is when you’re working in software like Logic Pro X or Final Cut Pro. And lastly, if you want a crisper, more detailed image, a monitor might be your best bet.

Why Monitors Are Better Than TVs for Gaming

If you want an edge while playing fast-paced video games, you may want to consider a high-quality monitor, like this Alienware 25-inch Gaming Monitor (on Amazon). This holds even if you are using a gaming console. While consoles are designed for use with a TV, you can still connect them to a computer monitor for a more detailed gaming image.

Seeing All The Angles

So now you know that TVs and monitors can be used interchangeably, provided you have the right connections. However, you should be aware of each device’s intended purpose, their similarities and differences, and their pros and cons. In general, though, hooking a computer up to a TV (or a console up to a monitor) is a pretty easy and useful connection to make!